Quoting: Why Small-Group Quoting Is Harder This Season?

There’s a specific kind of pressure underwriting teams are feeling right now. It doesn’t always show up in dashboards. It shows up in longer review cycles, in emails marked “urgent,” in spreadsheets with five tabs instead of two. Small-group quoting hasn’t exploded overnight; it has thickened. Each submission carries extra weight. Each revision pulls in another variable. The work hasn’t changed in name, but it has changed in texture.

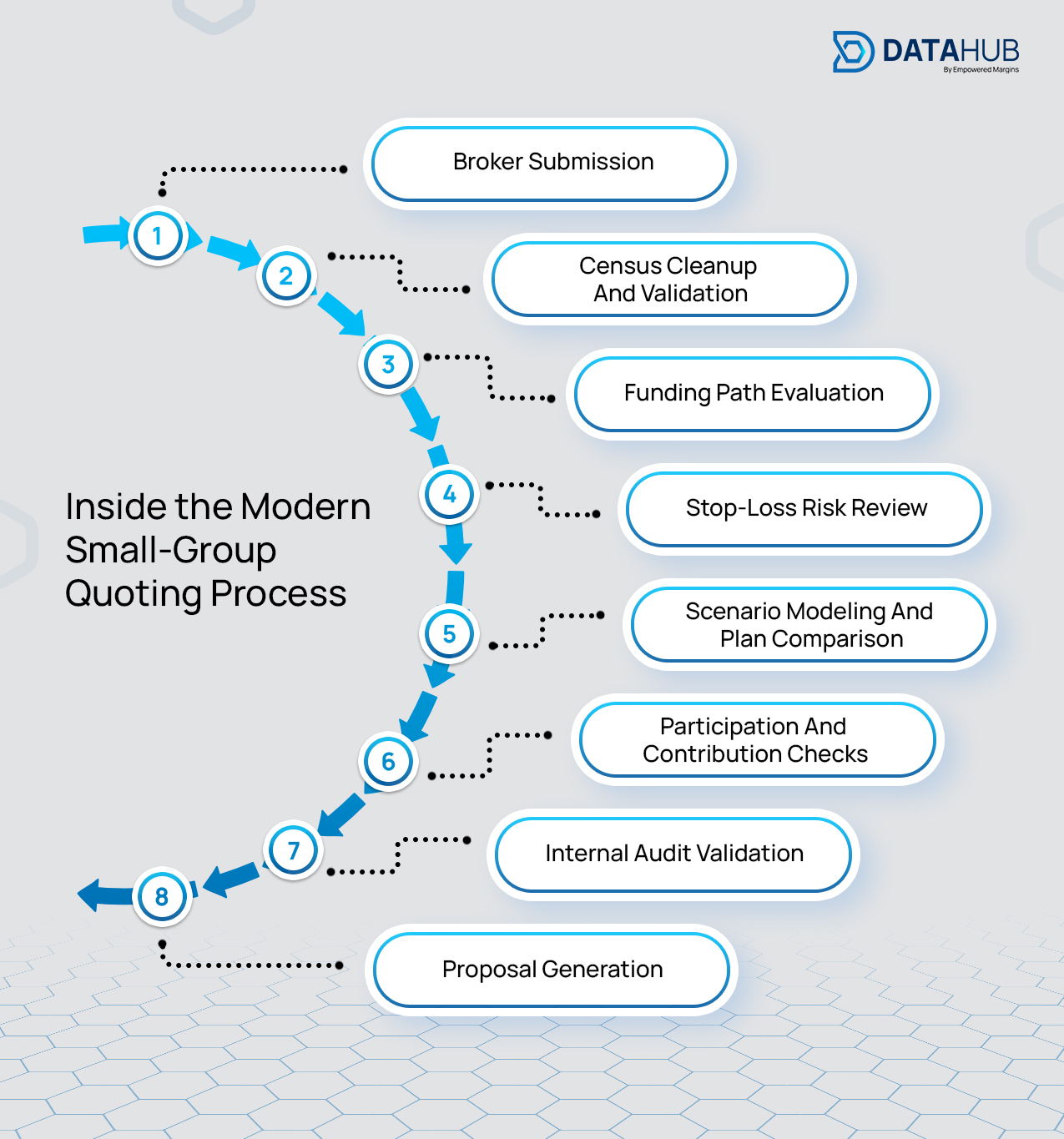

On the surface, the small-group market still looks familiar. Employers are shopping. Brokers are presenting options. Carriers are competing. But inside underwriting departments, the quoting workflow tells a different story. What used to move in a relatively straight line now branches, loops, and doubles back. The difficulty this season isn’t about handling volume alone; it’s about managing complexity that stacks at every stage of the quote lifecycle.

The Funding Conversation Is No Longer Linear

Small employers are no longer reviewing a single fully insured proposal and making a decision. Rising premium pressure and tighter contribution budgets have shifted broker conversations toward alternative funding strategies. It’s common to see fully insured quotes evaluated alongside level-funded structures, MEWA arrangements, and regional carrier options within the same request.

Each funding model introduces its own underwriting logic. Stop-loss assumptions vary. Participation thresholds shift. Contribution requirements change. Documentation standards differ. What once required a single pricing path now demands parallel modeling tracks that must stay aligned internally and defensible externally.

This structural branching is what increases cognitive load. Underwriters aren’t simply adjusting rates; they’re managing interconnected scenarios where a small demographic shift in the census may affect each funding type differently. Maintaining consistency across those paths takes deliberate coordination, especially when revisions enter the picture.

Data Friction Is Slowing the Entire Workflow

The quoting challenge often begins before underwriting analysis even starts. Census files arrive in inconsistent formats. Dependent tiers don’t match plan structures. Eligibility dates are missing. Claims histories are embedded inside PDFs that require manual extraction. Contribution splits change midway through evaluation.

Individually, these issues seem manageable. Collectively, they slow intake and create unnecessary back-and-forth. Skilled underwriters spend valuable time restructuring spreadsheets instead of evaluating risk. Every manual data touchpoint increases the chance of inconsistency between internal models and external proposals.

This is where DataHub’s AI-Enabled SmartExtractor™ directly improves workflow stability. Instead of re-keying data from multiple sources, SmartExtractor™ captures census, claims, and plan information and structures it into standardized formats at intake. Missing fields surface early. Formatting inconsistencies are resolved before modeling begins. By cleaning and organizing data at the entry point, underwriting teams reclaim time and reduce downstream correction cycles.
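
To make the idea of surfacing missing fields at intake concrete, here is a minimal sketch in Python. It is not SmartExtractor™ or any DataHub API; the `CensusRow` fields, the tier codes, and the `intake_issues` helper are all hypothetical names chosen for illustration, and a real census will carry more columns and carrier-specific tier definitions.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional, Tuple

# Hypothetical tier codes for illustration; real plan structures vary by carrier.
VALID_TIERS = {"EE", "ES", "EC", "FAM"}


@dataclass
class CensusRow:
    """One employee line from a census file (assumed, simplified layout)."""
    employee_id: str
    date_of_birth: Optional[date]
    tier: Optional[str]
    eligibility_date: Optional[date]


def intake_issues(rows: List[CensusRow]) -> List[Tuple[str, str]]:
    """Return (employee_id, issue) pairs for rows that need broker follow-up."""
    issues: List[Tuple[str, str]] = []
    for row in rows:
        if row.date_of_birth is None:
            issues.append((row.employee_id, "missing date of birth"))
        if row.eligibility_date is None:
            issues.append((row.employee_id, "missing eligibility date"))
        if row.tier is None:
            issues.append((row.employee_id, "missing coverage tier"))
        elif row.tier.strip().upper() not in VALID_TIERS:
            # The tier label does not map onto the assumed plan structure.
            issues.append((row.employee_id, f"unrecognized tier: {row.tier}"))
    return issues


if __name__ == "__main__":
    census = [
        CensusRow("E1", date(1985, 3, 2), "EE", date(2024, 1, 1)),
        CensusRow("E2", None, "Family", None),
    ]
    for employee_id, issue in intake_issues(census):
        print(employee_id, issue)
```

Running a check like this before any modeling starts means the list of gaps goes back to the broker in one request rather than emerging one revision at a time.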

Stop-Loss Evaluation Has Become More Deliberate

For level-funded and MEWA structures, stop-loss assumptions are receiving heightened attention this season. Employers want cost predictability. Brokers want competitive positioning. Internal risk leaders want pricing defensibility.

As a result, underwriters are modeling scenarios with greater precision. Large claimant history receives deeper analysis. Risk thresholds are debated internally before approval. Groups that once qualified as straightforward submissions now require careful interpretation.

This deliberation isn’t a weakness; it’s responsible underwriting. But it does extend review time unless the surrounding process is structured.

DataHub’s SmartRules™ Engine embeds underwriting guidelines directly into the workflow. Participation requirements, contribution minimums, and state-specific nuances trigger automatically during intake. Exceptions surface immediately instead of appearing at final review. That early validation reduces revision loops and prevents late-stage compliance issues from delaying release.
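
As a rough illustration of rules that fire at intake rather than at final review, the sketch below checks a submission against participation and contribution minimums. It is not the SmartRules™ Engine; the `GroupSubmission` fields, the `rule_exceptions` helper, and the 75% and 50% thresholds are assumptions made up for the example, since actual minimums depend on carrier guidelines and state rules.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical thresholds for illustration only; real guidelines vary.
MIN_PARTICIPATION_PCT = 75.0
MIN_CONTRIBUTION_PCT = 50.0


@dataclass
class GroupSubmission:
    """Simplified view of a small-group submission (assumed fields)."""
    eligible_employees: int
    enrolling_employees: int
    employer_contribution_pct: float  # share of employee-only premium, 0-100


def rule_exceptions(sub: GroupSubmission) -> List[str]:
    """Return human-readable exceptions; an empty list means no rule fired."""
    if sub.eligible_employees <= 0:
        return ["no eligible employees on census"]

    exceptions: List[str] = []
    participation = 100.0 * sub.enrolling_employees / sub.eligible_employees
    if participation < MIN_PARTICIPATION_PCT:
        exceptions.append(
            f"participation {participation:.1f}% is below the "
            f"{MIN_PARTICIPATION_PCT:.0f}% minimum"
        )
    if sub.employer_contribution_pct < MIN_CONTRIBUTION_PCT:
        exceptions.append(
            f"employer contribution {sub.employer_contribution_pct:.1f}% is below the "
            f"{MIN_CONTRIBUTION_PCT:.0f}% minimum"
        )
    return exceptions


if __name__ == "__main__":
    submission = GroupSubmission(
        eligible_employees=20,
        enrolling_employees=14,
        employer_contribution_pct=50.0,
    )
    for message in rule_exceptions(submission):
        print(message)
```

Keeping the thresholds as named constants, separate from the check itself, is one way to let guideline changes land in a single place instead of being re-typed into each spreadsheet.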

Spreadsheet-Based Modeling Is Reaching Its Limits

When brokers request side-by-side comparisons across funding structures, spreadsheet workflows become fragile. Each adjustment requires manual recalculation. Version control becomes difficult to track. Small edits ripple unpredictably across tabs.

The issue isn’t analytical capability; it’s structural inefficiency. As scenario variations increase, spreadsheets become harder to maintain without introducing discrepancies.

DataHub’s SmartPlan™ Comparison Tool centralizes modeling within a controlled interface. Plan variables are structured, adjustments are parameter-driven, and comparisons remain aligned automatically. Underwriters can evaluate multiple funding paths without rebuilding frameworks each time assumptions shift. That consistency preserves accuracy while accelerating scenario development.

Internal Quality Control Is Now Critical

During peak quoting cycles, fatigue amplifies risk. A missed contribution threshold or a misplaced census field can trigger rework, broker dissatisfaction, or compliance exposure. Internal quality checks are no longer optional safeguards; they are necessary controls.

The Smart Audits Tool within DataHub continuously reviews submissions for inconsistencies, missing documentation, and rule conflicts before quotes are finalized. Instead of relying solely on manual review, underwriting teams benefit from embedded validation that protects accuracy during high-volume periods.

Once approval is secured, proposal turnaround often becomes the final bottleneck. Transferring data from internal models into broker-facing documents introduces another opportunity for discrepancy. DataHub’s Smart Proposal™ generates structured outputs directly from validated underwriting data, eliminating manual copying and reducing release time.

The Core Reality: Operational Density Has Increased

Small-group quoting isn’t harder because underwriting judgment has weakened. It’s harder because operational density has increased across every stage of the process. Funding diversification has multiplied modeling paths. Data quality inconsistencies require structured intake. Stop-loss scrutiny has intensified internal discussions. Broker expectations for responsiveness remain high.

When these factors converge, the workflow stretches. Teams feel pressure not because they lack expertise, but because their systems weren’t designed for this level of interconnected complexity.

DataHub addresses this by treating quoting as a coordinated workflow rather than a sequence of isolated tasks. Structured intake reduces cleanup. Embedded rules prevent late-stage surprises. Centralized modeling maintains consistency. Automated audits protect accuracy. Proposal generation shortens delivery time.

The market may continue evolving. Funding conversations will keep expanding. Risk evaluation will always require careful thought. But when the operational foundation is stable, underwriting teams regain control over pace and precision.

This season hasn’t simply increased activity. It has deepened the structure of each submission. And navigating that structure effectively requires systems built for the way small-group quoting now truly operates.